#Do we need to install apache spark install

Next we prepare for getting Spark on the local computer. I created a spark folder in the home directory and assigned an environment variable. I derived this step after reviewing the materials in a couple of resources.

Next, we add the directory location to the PATH. To do that we first open either the /etc/profile or the ~/.bashrc file.

    nano ~/.bashrc # or /etc/profile

At the bottom of either file, insert the following commands. I have already filled in what the installed Spark instance directory looks like here. You can perform this part afterwards if you would like as well. If you unpack the downloads in either method below so that they reside directly in the higher level "spark/" directory, it's unnecessary to include the additional refinement.

    export SPARK_HOME=/home//spark/ # spark-3.0.1-bin-hadoop2.7 # optional
    export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64
    export PATH=$PATH:$SPARK_HOME/bin:$JAVA_HOME/bin

Now source the file to make SPARK_HOME and JAVA_HOME available. This will allow you to reference Spark using $SPARK_HOME in the command line, and other applications will also use this variable to launch Spark.

    source ~/.bashrc

##Getting Spark from Apache

To get Apache Spark directly, visit their website and navigate to the "Downloads" page. Once on their downloads page, select the versioning combination (Spark & Hadoop) you would like to download. Since I don't have either I downloaded both, but will install Spark with Hadoop version 2.7. Verify the downloads to ensure they are authentic. Only install from the CLI if the downloads have been authenticated.
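The verification step mentioned for the downloads can be sketched as a checksum comparison. This is a minimal sketch, not the author's procedure: it assumes GNU `sha512sum` is available, `verify_sha512` is a hypothetical helper name, and a throwaway file stands in for the real archive. It covers only the digest comparison, not GPG signature checks.

```shell
# Sketch: compare a file's SHA-512 digest with an expected hex string.
# Assumes GNU coreutils `sha512sum` (on macOS, `shasum -a 512` is the usual substitute).
verify_sha512() {
    file="$1"
    # Normalise the expected digest: strip whitespace, lower-case the hex.
    expected="$(printf '%s' "$2" | tr -d ' \n' | tr 'A-F' 'a-f')"
    actual="$(sha512sum "$file" | cut -d' ' -f1)"
    [ "$actual" = "$expected" ]
}

# Demo with a throwaway file standing in for the downloaded archive.
# In practice the expected digest comes from the published checksum file,
# not from the archive itself.
archive="$(mktemp)"
printf 'placeholder archive contents\n' > "$archive"
digest="$(sha512sum "$archive" | cut -d' ' -f1)"

if verify_sha512 "$archive" "$digest"; then
    echo "checksum OK"
else
    echo "checksum MISMATCH" >&2
fi
```

With a real download you would pass the archive path and the digest copied from the checksum file published next to it, and stop if the function returns a non-zero status.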

#Do we need to install apache spark software

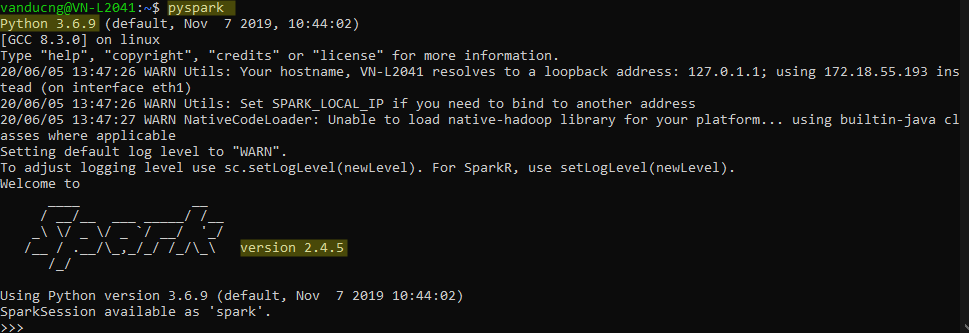

There are a couple of routes to getting Apache Spark. The first is directly from the Apache website, the other is through the R package sparklyr. Details on each method will be provided in the following subsections.

You need to have Java installed on your machine, and the JAVA_HOME environment variable should be defined. I have both Java 8 and 11 installed on my machine, and I have executed the steps in this post with Java 8 (OpenJDK AMD64). You can locate your Java/JVM path from the terminal. Depending on how you installed it, it will likely be in /usr/lib/jvm/.

If you don't have Java on your machine you can get it from the Java website or apt.

    sudo apt install openjdk-8-jre # or whichever version you need: 8, 11, 13, 14

You should only need the JRE, but if you want additional functionality you can go with openjdk-8-jdk. To look at all the different options, press Tab twice after typing openjdk- to get a list of the available packages.
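One way to locate the Java path is to resolve the `java` binary through its symlinks. This is a sketch under assumptions: it expects a Linux system where `readlink -f` is available, and `java_home_from_bin` is a hypothetical helper that simply drops the trailing `/bin/java`.

```shell
# Derive a candidate JAVA_HOME from the path of a java binary by
# dropping the trailing /bin/java.
java_home_from_bin() {
    dirname "$(dirname "$1")"
}

if java_bin="$(command -v java)"; then
    # Follow symlinks such as /usr/bin/java -> /etc/alternatives/java -> ...
    java_real="$(readlink -f "$java_bin")"
    echo "java binary:         $java_real"
    echo "candidate JAVA_HOME: $(java_home_from_bin "$java_real")"
else
    echo "java not found on PATH"
fi
```

On some Java 8 packages the binary sits under `jre/bin`, in which case the candidate ends in `/jre` and its parent directory may be the value you want. Treat the output as a starting point rather than a definitive answer.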

#Do we need to install apache spark mac

To install PySpark from source, refer to Building Spark.

Note that PySpark requires Java 8 or later with JAVA_HOME properly set. If using JDK 11, set =true for Arrow related features.

Note for AArch64 (ARM64) users: PyArrow is required by PySpark SQL. If PySpark installation fails on AArch64 due to PyArrow installation errors, you can install PyArrow >= 4.0.

In this post I will go over installing Apache Spark and initial interactions from within R. I am currently using Linux/Ubuntu 20.04, so the instructions are tailored to my environment. The process should be similar on other Linux distributions as well as in Mac and Microsoft environments.
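The Java requirement for PySpark (Java 8 or later, with JAVA_HOME set) can be checked before installing. The following is a sketch only: `java_major` is a hypothetical helper, and the parsing assumes the first line of `java -version` contains the version in double quotes, which may not hold if extra JVM options print a banner first.

```shell
# Map a Java version string to its major version number.
# Pre-9 releases report "1.x" (1.8.0_292 is Java 8); later ones report "x.y.z".
java_major() {
    case "$1" in
        1.*) echo "$1" | cut -d. -f2 ;;
        *)   echo "$1" | cut -d. -f1 ;;
    esac
}

if [ -n "${JAVA_HOME:-}" ] && [ -x "$JAVA_HOME/bin/java" ]; then
    # `java -version` writes to stderr; the version is the first quoted token.
    version="$("$JAVA_HOME/bin/java" -version 2>&1 | head -n 1 | cut -d'"' -f2)"
    echo "Java major version: $(java_major "$version")"
else
    echo "JAVA_HOME is unset or does not contain bin/java"
fi
```

A reported major version below 8, or the fallback message, suggests fixing the Java setup before attempting the PySpark install.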

Note that installing PySpark with or without a specific Hadoop version in this way is experimental. It can change or be removed between minor releases.

##Using Conda

Conda is an open-source package management and environment management system (developed by Anaconda). The tool is both cross-platform and language agnostic. Conda uses so-called channels to distribute packages, and together with the default channels by Anaconda itself, the most important channel is conda-forge, which is the community-driven packaging effort that is the most extensive & the most current (and also serves as the upstream for the Anaconda channels in most cases). You can create a new conda environment from your terminal and activate it.

With a Spark distribution unpacked, set SPARK_HOME from inside its directory and add the bundled Python zip files to PYTHONPATH (the second line relies on bash arrays):

    export SPARK_HOME=`pwd`
    export PYTHONPATH=$(ZIPS=("$SPARK_HOME"/python/lib/*.zip); IFS=:; echo "${ZIPS[*]}"):$PYTHONPATH
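The PYTHONPATH line joins every zip file under `$SPARK_HOME/python/lib` with colons using a bash array. For shells without arrays, the same join can be sketched with a loop. `join_spark_zips` is a hypothetical helper, and the zip file names in the demo are placeholders created in a temporary directory, not the names shipped in any particular Spark release.

```shell
# Join every zip under <dir>/python/lib with ":" without bash arrays.
join_spark_zips() {
    joined=""
    for zip in "$1"/python/lib/*.zip; do
        [ -e "$zip" ] || continue        # skip the unexpanded glob if no zips exist
        joined="${joined:+$joined:}$zip"
    done
    printf '%s\n' "$joined"
}

# Demo against a throwaway directory with placeholder file names.
demo_home="$(mktemp -d)"
mkdir -p "$demo_home/python/lib"
touch "$demo_home/python/lib/py4j-src.zip" "$demo_home/python/lib/pyspark.zip"

spark_zips="$(join_spark_zips "$demo_home")"
echo "$spark_zips"
```

Against a real installation the result would be used as `export PYTHONPATH="$(join_spark_zips "$SPARK_HOME"):$PYTHONPATH"`.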

    PYSPARK_HADOOP_VERSION=2.7 pip install pyspark -v

Supported values in PYSPARK_HADOOP_VERSION are:

- without: Spark pre-built with user-provided Apache Hadoop
- 2.7: Spark pre-built for Apache Hadoop 2.7
- 3.2: Spark pre-built for Apache Hadoop 3.2 and later (default)
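Because only a few values of PYSPARK_HADOOP_VERSION are listed as supported, a small guard can catch typos before pip runs. This is a sketch: `is_supported_hadoop_version` is a hypothetical helper, the accepted values are taken from the list above, and the install command is printed rather than executed so the example has no side effects.

```shell
# Succeed only for the values listed as supported.
is_supported_hadoop_version() {
    case "$1" in
        without|2.7|3.2) return 0 ;;
        *) return 1 ;;
    esac
}

requested="2.7"
if is_supported_hadoop_version "$requested"; then
    # Printed rather than executed so the sketch has no side effects.
    echo "PYSPARK_HADOOP_VERSION=$requested pip install pyspark -v"
else
    echo "unsupported PYSPARK_HADOOP_VERSION: $requested" >&2
fi
```

Since this installation path is described as experimental, the accepted values may differ in other PySpark releases, so the list in the `case` statement should be checked against the version you are installing.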